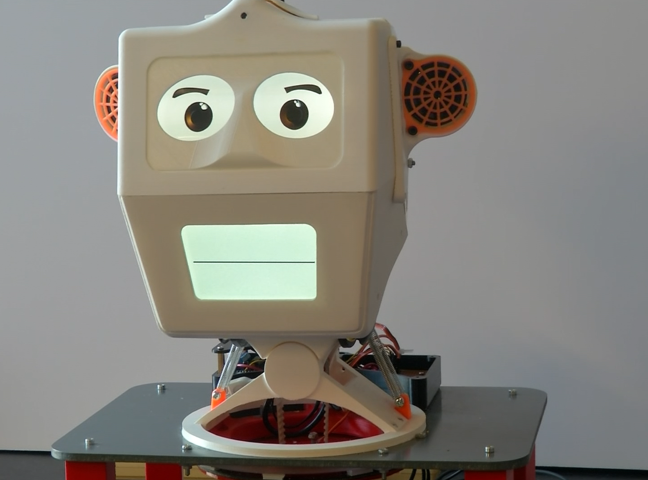

Social robot interface SII (2015-2017)

This work builds closely on previous efforts to develop effective and meaningful ways for people to interact with robots. The underlying motivation has been to develop a social interface (i.e. a robotic head) that is both capable of social interaction and practical for use on a real robotic system. The system must therefore not only have the features and capabilities needed for social interaction, but also meet practical requirements such as being lightweight, energy efficient, easily reconfigurable, and adaptable to existing robot control architectures.

Sii is a lightweight, 3D-printed robotic head that can express emotions through two LCD screens. The head is connected to a 3-degree-of-freedom neck mechanism, providing rotation in pitch, yaw and roll. Sii can communicate with people through facial expression and sound, using speakers located in the side of the head. Further sensing is achieved through a camera located above the top screen.

To date, two full design iterations have been undertaken. The newer embodiment was displayed at the SUGAR project Expo, which took place at Stanford University in summer 2016.